Most IT teams are drowning in data but starved for insight. AI doesn’t replace your team; it gives them the clarity to act before things go wrong.

Imagine this scenario:

2:47 AM — Tuesday

A global bank’s trading platform starts slowing down. Latency creeps up. Databases stall. Three critical servers quietly approach their limits.

3:14 AM — Platform down.

The post-mortem revealed the worst part: every warning sign had been sitting in the data for 72 hours. Unusual query patterns. A key API running 40% slower than normal. The data was there. The monitoring was running. No alert fired. Nobody connected the dots in time.

This isn’t a rare edge case. According to Gartner, 70% of IT outages are caused by changes that produce detectable signals well before failure. The problem isn’t a lack of data; it’s a lack of ability to make sense of it fast enough to matter.

At NimbleWork, we call this the intelligence gap: the growing distance between the signals your IT environment produces and your team’s ability to act on them in time. As cloud infrastructure, hybrid systems, and client SLAs grow more complex, that gap keeps widening, and traditional tools and processes are not built to close it.

AI doesn’t solve this by replacing your engineers or service managers. It solves it by making sure the right information reaches the right people fast enough to act on. Here are the four areas where AI makes the biggest practical difference in IT service delivery, and how NimbleWork puts them to work for some of the world’s most demanding organisations.

01. Smarter status reporting: always current, never late

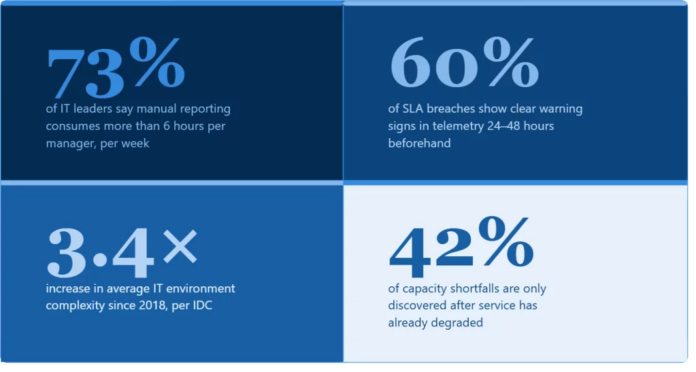

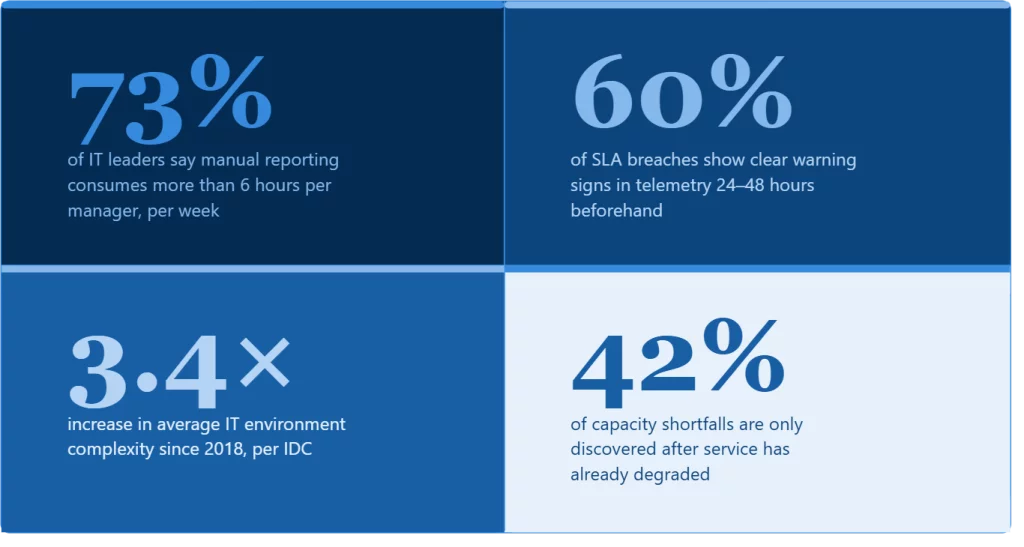

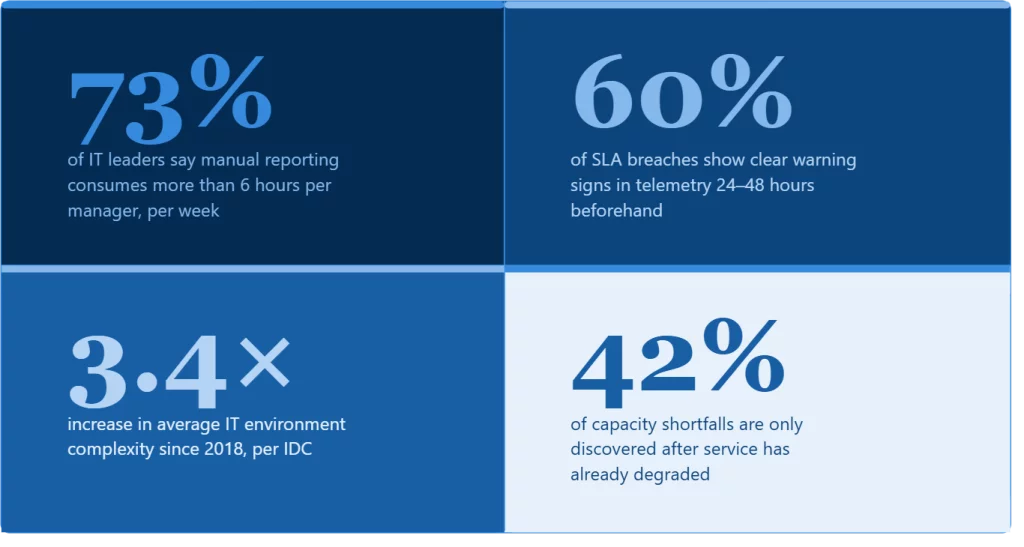

Ask any service delivery manager how much time their team spends building status reports each week. The answer is always some variation of “too much.” Then ask how confident they are that those reports are accurate. The answer changes fast.

The core problem is structural. A weekly report is a snapshot of a moving scene. By the time it’s pulled together, formatted, reviewed, and sent, incidents have evolved, ticket queues have shifted, and SLA positions have changed. The report tells stakeholders where things were, not where they are. And in IT service delivery, that lag is exactly where things go wrong.

AI fixes this at the root. Instead of waiting for a human to manually extract data from ServiceNow, Datadog, Jira, and three other tools every week, NimbleWork’s AI reporting layer keeps a continuously updated picture of your environment and automatically surfaces the right version of that picture to each audience.

Pull data from everywhere, automatically

Your ITSM, monitoring tools, cloud consoles, and project trackers feed into one unified view: no manual copying, no lag, no reconciliation.

Turn numbers into plain-language narratives

AI doesn’t just aggregate; it interprets. It spots trends, flags anomalies, and produces summaries that read like a senior analyst wrote them.

Deliver the right version to the right audience

Your CTO gets a two-paragraph executive summary. Your network engineer gets a detailed breakdown. Your client gets a plain-English update. All from the same underlying data, generated simultaneously.

The biggest shift: reports stop being tied to a calendar. Instead of fixed Monday updates regardless of what’s happening, AI triggers reports when something meaningful changes. A service health bulletin goes out within minutes of a significant deviation being detected, not at the end of the week.

For Fortune 500 IT organisations managing dozens of vendor relationships and hundreds of SLAs simultaneously, this shift from scheduled to event-driven reporting is not a minor convenience. It’s the difference between catching a developing problem on Tuesday and discovering it in Friday’s report.
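The event-driven idea can be made concrete with a minimal Python sketch. It assumes each metric already has a learned baseline mean and standard deviation. An update is triggered only when the latest reading sits more than a chosen number of standard deviations away. The `Baseline` type, the `should_trigger_report` and `service_bulletins` helpers, and the 3-sigma cut-off are hypothetical illustrations, not NimbleWork’s implementation.

```python
from dataclasses import dataclass

# Hypothetical cut-off: readings more than 3 standard deviations from baseline count as "significant".
DEVIATION_SIGMA = 3.0


@dataclass
class Baseline:
    mean: float  # typical value learned from history
    std: float   # typical variation around that value


def should_trigger_report(value: float, baseline: Baseline, sigma: float = DEVIATION_SIGMA) -> bool:
    """Return True when a reading deviates enough from its baseline to warrant an update."""
    if baseline.std == 0:
        # A perfectly flat baseline: any change at all is a deviation.
        return value != baseline.mean
    z_score = abs(value - baseline.mean) / baseline.std
    return z_score > sigma


def service_bulletins(readings: dict[str, float], baselines: dict[str, Baseline]) -> list[str]:
    """Build a one-line bulletin for each metric whose latest reading breaches its baseline."""
    bulletins = []
    for metric, value in readings.items():
        base = baselines[metric]
        if should_trigger_report(value, base):
            bulletins.append(f"{metric}: {value:g} vs. baseline {base.mean:g} ± {base.std:g}")
    return bulletins


# Example: latency is 4.8 sigma above baseline, error rate is within normal variation.
baselines = {"api_latency_ms": Baseline(120, 10), "error_rate_pct": Baseline(0.5, 0.2)}
print(service_bulletins({"api_latency_ms": 168, "error_rate_pct": 0.6}, baselines))
# ['api_latency_ms: 168 vs. baseline 120 ± 10']
```

In a real pipeline the check would run on every new reading rather than on a schedule. That is what moves reporting from the calendar to the event.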

02. Proactive risk alerting: catch problems before they become incidents

Traditional monitoring is built on thresholds. When a metric crosses a line, an alert fires. This approach works for simple, obvious failures, such as a disk at 95% or a service returning errors. It breaks down entirely for the far more common failure mode: gradual, multi-system drift where no single number trips the alarm but the combination is catastrophic.

AI adds a reasoning layer on top of your monitoring stack. Instead of watching individual numbers, it watches patterns. It learns what “normal” looks like for each component of your environment, at every time of day, across different business cycles. When things drift, even subtly, it surfaces the risk before any threshold is crossed.

Here are three specific signals AI watches that human teams managing complex environments simply cannot track manually:

Infrastructure health signals

AI learns that a server at 78% memory utilisation is perfectly normal at 2am on a Sunday and a serious risk signal at 9am on a Monday during month-end processing. Context transforms the meaning of every data point.
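One simple way to capture that context is to keep a separate baseline for every weekday-and-hour slot, then score each new reading against its own slot. The sketch below assumes hourly history exists for every slot it is asked about. The function names and the `min_std` floor are hypothetical. A real system would also model business cycles such as month-end.

```python
from collections import defaultdict
from datetime import datetime
from statistics import mean, pstdev


def build_hourly_baseline(history: list[tuple[datetime, float]]) -> dict[tuple[int, int], tuple[float, float]]:
    """Group historical readings by (weekday, hour) and store the mean and spread of each slot."""
    slots: dict[tuple[int, int], list[float]] = defaultdict(list)
    for ts, value in history:
        slots[(ts.weekday(), ts.hour)].append(value)  # weekday(): Monday=0 ... Sunday=6
    return {slot: (mean(vals), pstdev(vals)) for slot, vals in slots.items()}


def contextual_z_score(ts: datetime, value: float,
                       baseline: dict[tuple[int, int], tuple[float, float]],
                       min_std: float = 1.0) -> float:
    """Score how unusual a reading is for its own weekday and hour (assumes that slot has history)."""
    slot_mean, slot_std = baseline[(ts.weekday(), ts.hour)]
    # Floor the spread so a very stable slot does not turn tiny wobbles into huge scores.
    return (value - slot_mean) / max(slot_std, min_std)


# Three Sundays at 2am hover around 78%; three Mondays at 9am hover around 55%.
history = [(datetime(2024, 1, d, 2), v) for d, v in [(7, 76), (14, 78), (21, 80)]]
history += [(datetime(2024, 1, d, 9), v) for d, v in [(8, 53), (15, 55), (22, 57)]]
baseline = build_hourly_baseline(history)
print(contextual_z_score(datetime(2024, 1, 28, 2), 78, baseline))  # Sunday 2am: 0.0
print(contextual_z_score(datetime(2024, 1, 29, 9), 78, baseline))  # Monday 9am: about 14
```

The same 78% reading scores as ordinary in one slot and far outside normal in another. That is the “context” the paragraph describes.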

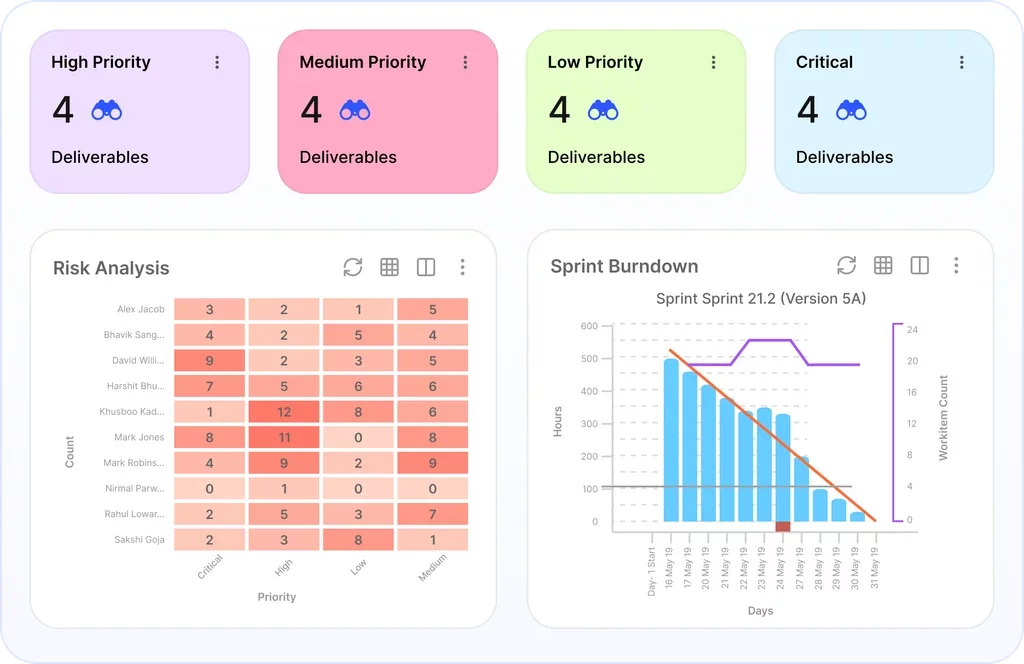

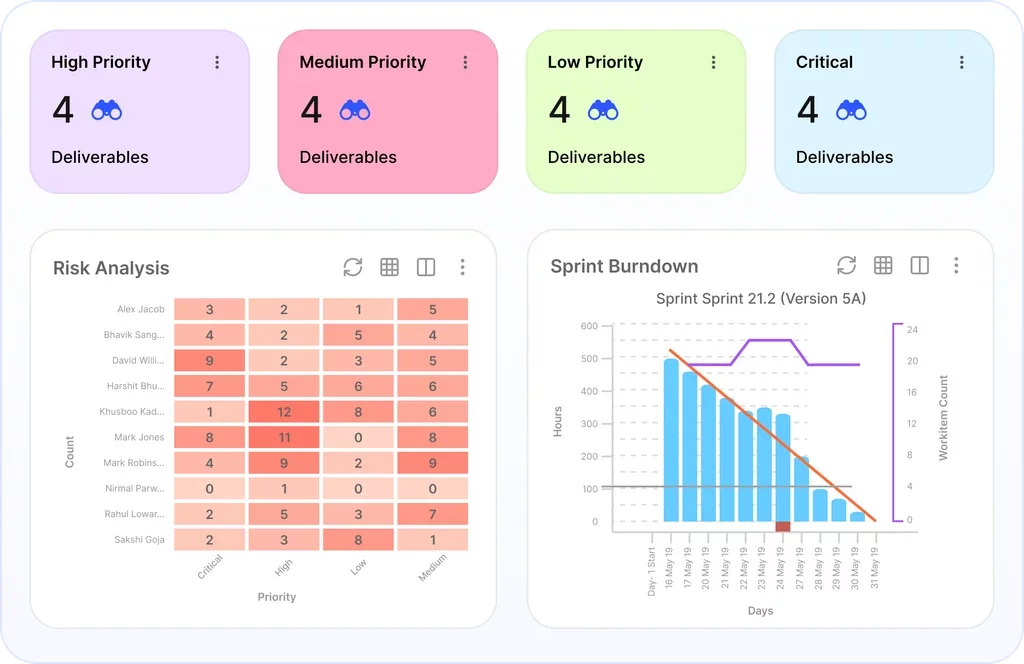

SLA breach prediction

Rather than logging breaches after they happen, models project which tickets are likely to breach hours before the window closes. The projection is based on current volume, team capacity, and historical resolution patterns. Your team gets a heads-up with time to act, not a post-mortem with blame to assign.
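The breach-prediction idea can be sketched with a deliberately simple queue model. Assume the team works tickets in deadline order at a steady rate estimated from historical resolution data. Project when each ticket will be finished, and flag any that would finish after its SLA deadline. The `Ticket` type, `predict_breaches`, and the constant-throughput assumption are all hypothetical. A production model would estimate per-ticket handling times instead.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Ticket:
    ticket_id: str
    sla_deadline: datetime


def predict_breaches(open_tickets: list[Ticket], now: datetime, throughput_per_hour: float) -> list[str]:
    """Return IDs of tickets projected to miss their SLA at the current resolution rate.

    Assumes tickets are worked in deadline order and that `throughput_per_hour`
    (tickets resolved per hour) was estimated from historical resolution patterns.
    """
    if throughput_per_hour <= 0:
        # No resolution capacity: every open ticket is at risk.
        return [t.ticket_id for t in open_tickets]
    at_risk = []
    queue = sorted(open_tickets, key=lambda t: t.sla_deadline)
    for position, ticket in enumerate(queue, start=1):
        # The n-th ticket in the queue finishes after n tickets' worth of work.
        projected_finish = now + timedelta(hours=position / throughput_per_hour)
        if projected_finish > ticket.sla_deadline:
            at_risk.append(ticket.ticket_id)
    return at_risk


now = datetime(2024, 1, 8, 9, 0)
queue = [
    Ticket("A", datetime(2024, 1, 8, 10, 0)),
    Ticket("B", datetime(2024, 1, 8, 10, 0)),
    Ticket("C", datetime(2024, 1, 8, 10, 15)),
    Ticket("D", datetime(2024, 1, 8, 14, 0)),
]
print(predict_breaches(queue, now, throughput_per_hour=2.0))  # ['C']: projected 10:30 vs. a 10:15 deadline
```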

Third-party and dependency risks

When a cloud API you depend on starts responding 40% slower than its 90-day baseline, that’s a risk signal even if nothing has visibly broken yet. AI catches these drifts early. Human teams managing hundreds of dependencies cannot.
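The 40%-over-baseline check above reduces to comparing two latency windows. This sketch assumes you have raw latency samples for the long baseline window (for example, 90 days) and for a recent window. `latency_drift` and the 1.4 ratio are illustrative choices, not a vendor API.

```python
from statistics import median

# Hypothetical tolerance: flag when recent latency is 40% or more above the baseline.
DRIFT_RATIO = 1.4


def latency_drift(baseline_ms: list[float], recent_ms: list[float],
                  ratio: float = DRIFT_RATIO) -> tuple[float, bool]:
    """Compare recent median latency with the long-window (e.g. 90-day) median.

    Medians rather than means keep a handful of outlier requests from
    dominating either window. Returns (slowdown_ratio, is_drifting).
    """
    base = median(baseline_ms)
    if base <= 0:
        raise ValueError("baseline latency must be positive")
    slowdown = median(recent_ms) / base
    return slowdown, slowdown >= ratio


print(latency_drift([100, 105, 95, 100, 102], [150, 148, 152]))  # (1.5, True)
```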

Even before AI entered the IT service delivery conversation, leading teams were already trying to solve the same problem: fragmented visibility. Nimble helped Teradata as the team used Kanban across consulting services, IT, and IT support-type projects. The goals were to improve visualization, manage dependencies, and integrate client, vendor, and third-party services into delivery cycles. AI takes the same need one step further: from seeing work clearly to detecting risks, capacity issues, and SLA signals early enough to act.

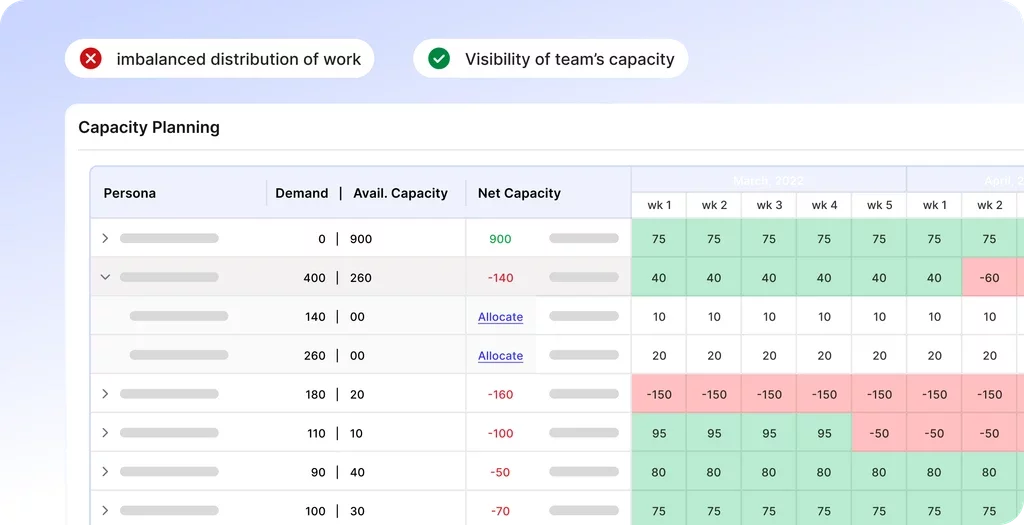

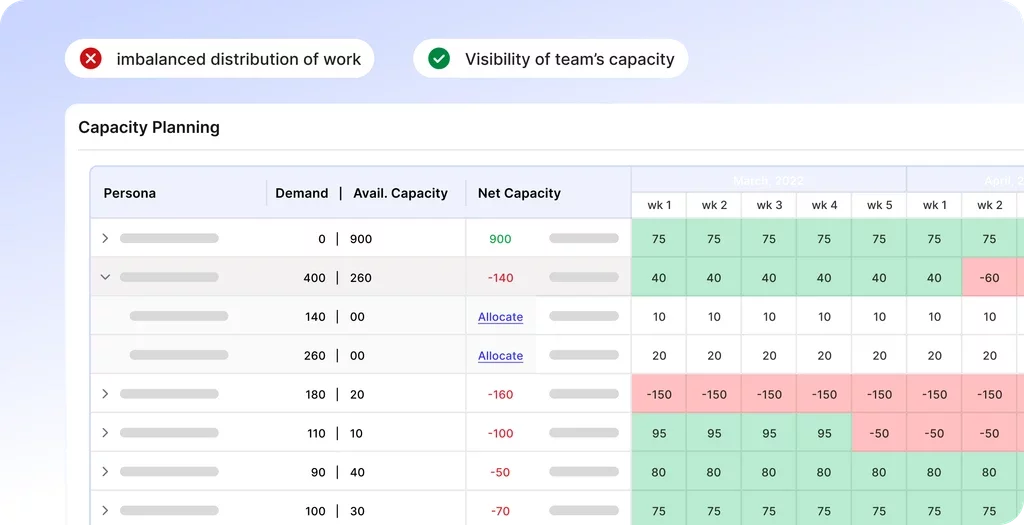

03. Smarter capacity planning: stop guessing, start forecasting

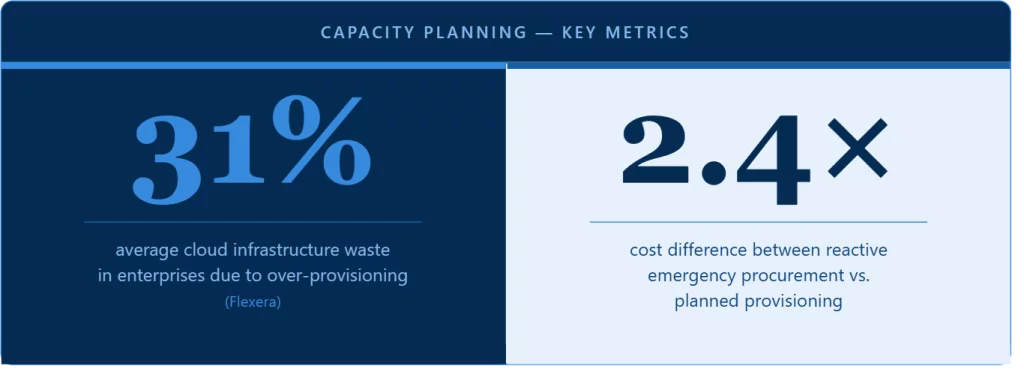

Capacity planning has always been an uncomfortable exercise. Plan too conservatively and you pay for infrastructure you don’t use. Plan too aggressively and you scramble during peak demand, degrading service precisely when your clients are watching most closely.

The traditional approach (look at last year’s data, apply a growth assumption, and add a safety buffer) was designed for stable, predictable environments. Modern enterprise IT environments are neither.

AI replaces the static annual forecast with a continuously updated demand model. It ingests business seasonality patterns, historical usage data, growth signals from sales pipelines, and real-time operational telemetry simultaneously. The result isn’t just a more accurate forecast. It’s a forecast that updates as conditions change, rather than waiting for the next quarterly review cycle.

For NimbleWork customers, this means capacity conversations shift from reactive firefighting to informed planning. That planning comes with forecast confidence scores, scenario modeling, and clear visibility into where the next bottleneck is likely to appear, and when. Teams that previously discovered capacity shortfalls after service had already degraded now see them coming weeks in advance.

The honest caveat: AI capacity models are very good at predicting the future based on patterns they’ve seen before. Novel scenarios, such as a major client migration or an unexpected product launch, still require human judgment. Use AI to handle routine forecasting so your senior engineers can focus on the edge cases that actually need them.

04. Better client communications: consistent, timely, and never an afterthought

Client communications are among the most inconsistently executed parts of IT service delivery, and among the most consequential. Updates get written after an incident is resolved, by engineers who are tired and already moving on to the next problem. The result is communications that are technically accurate but often delayed, unclear, or pitched at the wrong level for the audience reading them.

AI addresses this by decoupling communication quality from the conditions under which it’s produced. The same incident data that powers your internal reporting can automatically generate a client-facing update. That update is calibrated to the client’s technical level, clear about business impact, and ready for human review within minutes of resolution, not hours.

Beyond reactive updates, AI can detect early signs of client dissatisfaction before they surface as formal complaints. Sentiment analysis across email threads, ticket tone, and survey responses can flag accounts heading toward escalation weeks in advance. NimbleWork’s platform surfaces these signals so service delivery managers can address relationship issues before they become contract issues.

Non-Negotiable

AI-generated client communications always need human review before they go out. Tone errors or factual gaps in external messaging carry reputational risk that internal reporting errors don’t. This step is fast (a two-minute review beats a two-hour rewrite after the fact), and it’s never optional.
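One way to make the review step truly non-optional is to enforce it in the send path itself rather than in a checklist. In this minimal sketch, an AI-drafted update cannot be handed to the delivery function until a named reviewer has approved it. `ClientUpdate`, `approve`, `send`, and `ReviewRequired` are hypothetical names. `transport` stands in for whatever actually delivers the message, and the sample client and reviewer are made up.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class ClientUpdate:
    client: str
    body: str
    drafted_by_ai: bool = True
    approved_by: Optional[str] = None  # named human reviewer; stays None until sign-off


class ReviewRequired(Exception):
    """Raised when an AI draft is sent before a human has approved it."""


def approve(update: ClientUpdate, reviewer: str) -> ClientUpdate:
    """Record sign-off by a named reviewer (an empty name is rejected)."""
    if not reviewer.strip():
        raise ValueError("approval needs a named reviewer")
    update.approved_by = reviewer
    return update


def send(update: ClientUpdate, transport: Callable[[ClientUpdate], None]) -> None:
    """Hand the update to `transport` only once a human has signed off."""
    if update.drafted_by_ai and update.approved_by is None:
        raise ReviewRequired(f"draft for {update.client} has not been reviewed")
    transport(update)


outbox: list[ClientUpdate] = []
draft = ClientUpdate("Example Corp", "Service was restored at 03:40 UTC.")
try:
    send(draft, outbox.append)
except ReviewRequired as err:
    print("blocked:", err)  # blocked: draft for Example Corp has not been reviewed
send(approve(draft, "On-call service delivery manager"), outbox.append)
print(len(outbox))  # 1
```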

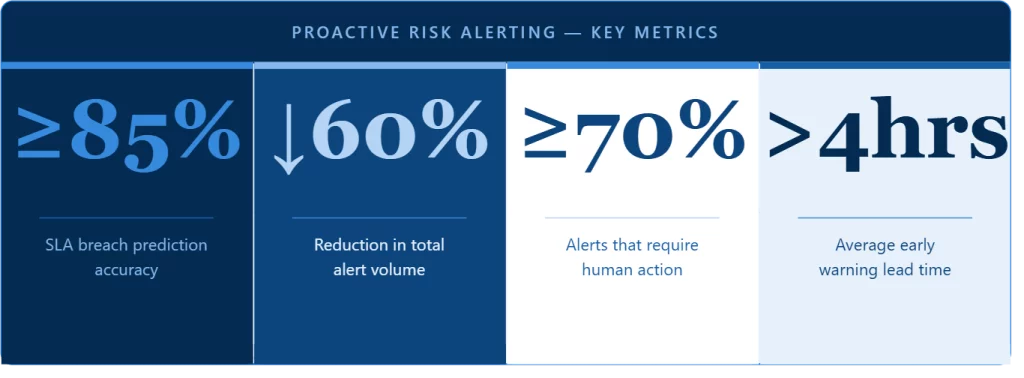

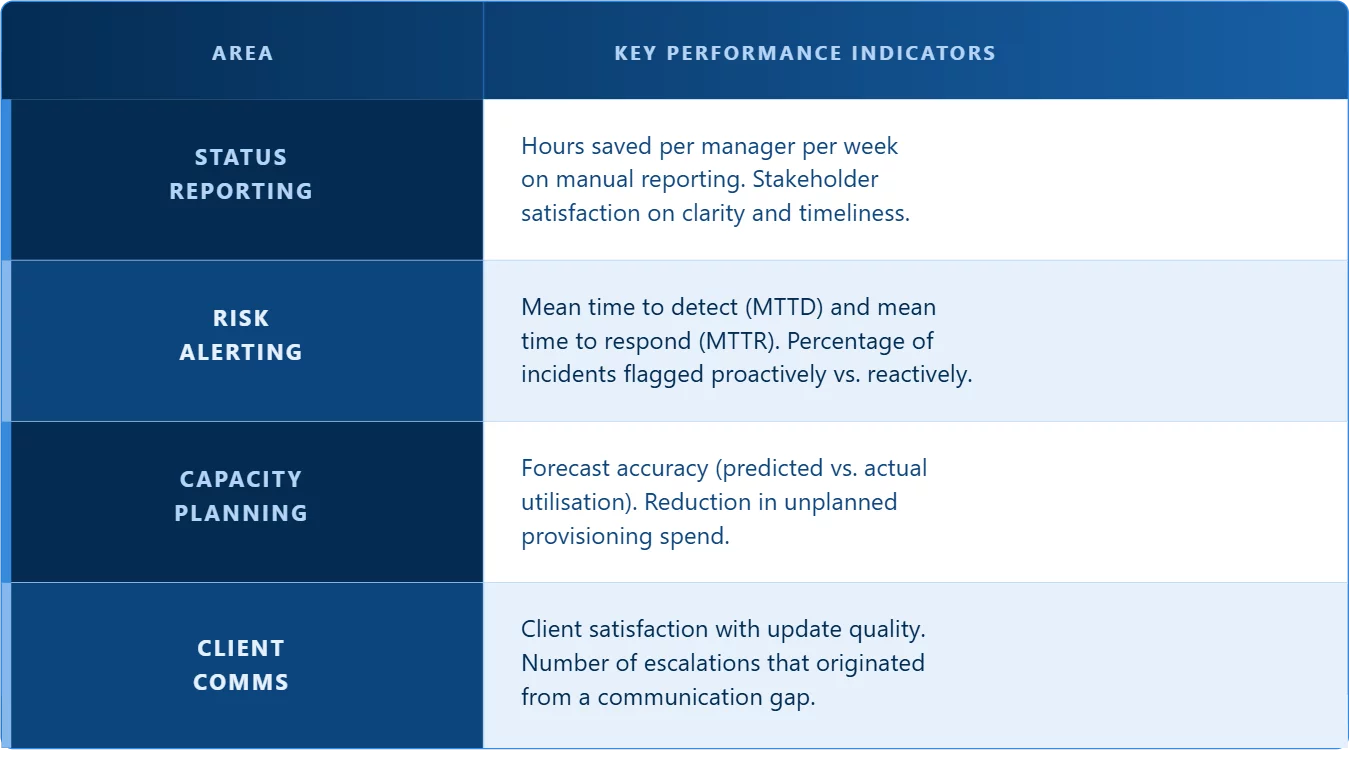

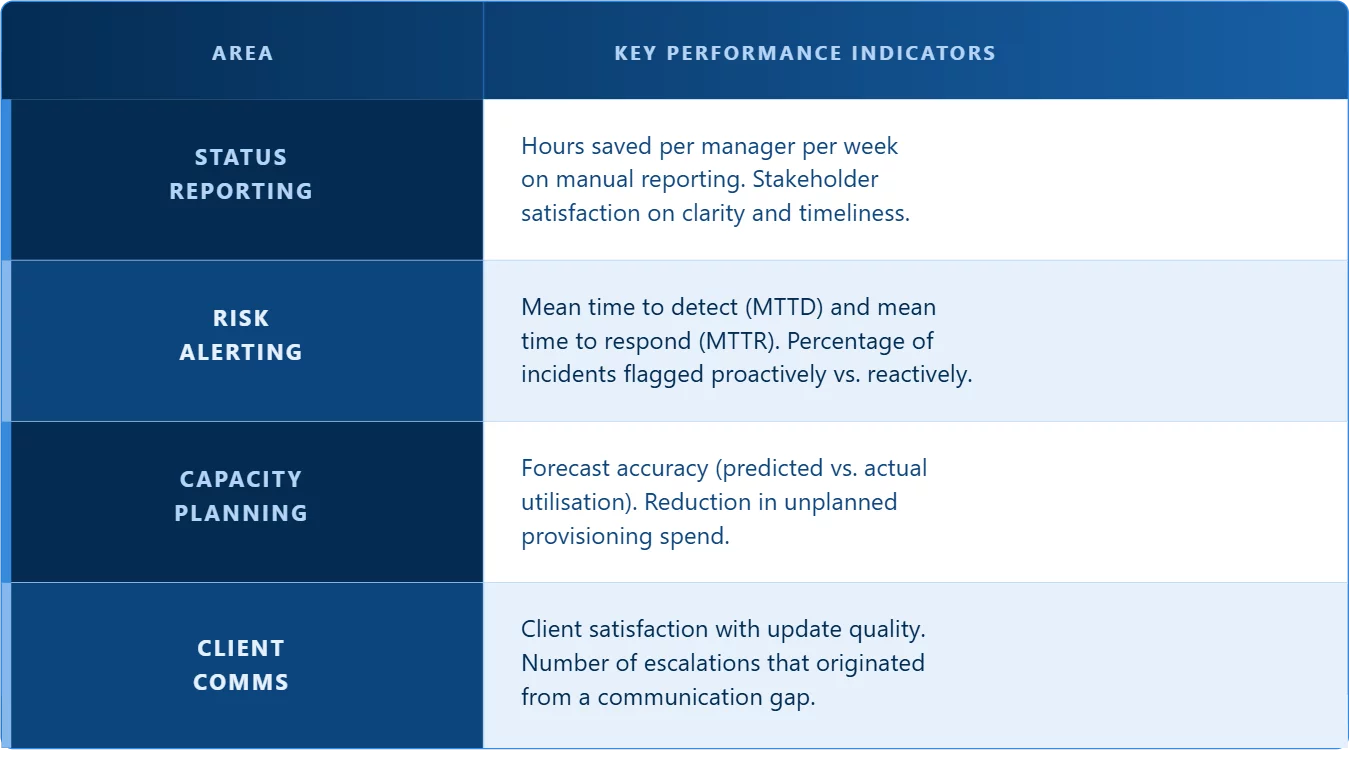

05. What to measure: KPIs that show real impact

The most common mistake teams make when measuring AI in service delivery is tracking activity instead of outcomes: how many reports were generated, how many alerts fired. These numbers tell you nothing about whether anything actually improved. Here’s the outcome-focused measurement framework that matters:

One KPI worth tracking across all four areas is the human intervention rate: how often your team corrects or overrides AI outputs. A high correction rate in a specific area isn’t a sign that AI is failing. It’s a signal that the model needs better training data or tighter guardrails there. Use it to improve, not to judge.
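Computing the human intervention rate needs nothing more than a record of each AI output’s area and whether a human corrected or overrode it. Here is a minimal sketch. It assumes reviews are available as `(area, was_corrected)` pairs; that input shape and the `intervention_rates` name are hypothetical.

```python
from collections import Counter


def intervention_rates(reviews: list[tuple[str, bool]]) -> dict[str, float]:
    """Share of AI outputs that humans corrected or overrode, per area.

    Each review is an (area, was_corrected) pair, e.g. ("reporting", False).
    """
    totals = Counter(area for area, _ in reviews)
    corrected = Counter(area for area, was_corrected in reviews if was_corrected)
    return {area: corrected[area] / totals[area] for area in totals}


reviews = [("reporting", False), ("reporting", True)] + [("alerting", True)] * 3 + [("alerting", False)]
print(intervention_rates(reviews))  # {'reporting': 0.5, 'alerting': 0.75}
```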

06. Governance: keeping humans in control

Most deployments of AI in IT service delivery don’t fail because the technology is flawed. They fail because governance is treated as something to figure out later, and “later” never comes. Workflows get automated without clear ownership. When the model makes a confident-sounding mistake, nobody knows whose job it is to catch it.

Effective governance for AI-assisted operations comes down to three principles:

Accountability stays with humans

AI can draft, flag, and forecast. But every client-facing output and every escalation decision needs a named human owner. No exceptions, regardless of how confident the model appears.

Auditability from day one

Log what the model recommended, what the human decided, and why they diverged. This isn’t just for compliance; it’s the primary mechanism for improving model performance over time.
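Here is a sketch of such a log entry, assuming decisions are written as JSON lines. Each record captures the model’s recommendation, the human decision, the reviewer, and the rationale. The record refuses to log a divergence without a reason. The field names, the `to_log_line` helper, and the sample values are hypothetical, not a NimbleWork schema.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    item_id: str               # ticket, alert, or forecast the decision concerns
    model_recommendation: str
    human_decision: str
    reviewer: str              # named owner of the final call
    rationale: str = ""        # why the human diverged, if they did
    logged_at: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())

    @property
    def diverged(self) -> bool:
        return self.model_recommendation != self.human_decision


def to_log_line(record: DecisionRecord) -> str:
    """Serialise one record as a JSON line, adding an explicit divergence flag."""
    if record.diverged and not record.rationale:
        # The "why" is what later model improvements depend on, so insist on it.
        raise ValueError("a divergence must record the reviewer's rationale")
    entry = asdict(record)
    entry["diverged"] = record.diverged
    return json.dumps(entry, sort_keys=True)


print(to_log_line(DecisionRecord("INC-1042", "escalate", "monitor", "J. Smith",
                                 "Known month-end batch job; load expected")))
```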

Scheduled calibration

AI model performance drifts as your environment changes. Build a quarterly review cycle into your operations rhythm, not just your incident playbook. Reactive calibration is too slow.

Organisations that build these foundations don’t just get better metrics. They build the internal trust in AI tooling that allows them to responsibly expand its role over time. That means moving from assisted reporting to autonomous triage, and from predictive alerts to genuinely self-healing infrastructure. That progression doesn’t happen without governance. With it, it’s a matter of when, not if.

The intelligence gap is real, it’s growing, and it’s costing enterprises in downtime, wasted capacity, and client relationships they can’t afford to lose. The good news: it’s also solvable, with the right tools, the right measurement framework, and the right governance in place from the start.

FAQs on AI in IT service delivery

How is AI different from the monitoring and alerting tools we already have in place, like Datadog or PagerDuty?

Traditional monitoring tools are threshold-based: they fire when a single metric crosses a line. AI sits on top of these tools and adds a reasoning layer. It watches patterns across multiple systems simultaneously, learns what “normal” looks like in context, and flags risk before any threshold is crossed. Think of the difference between a smoke alarm that triggers at 70°C and a system that notices the temperature has been rising steadily for six hours and warns you before it becomes a fire.

We’ve tried AI-powered tools before and the alert noise got worse, not better. How is this approach different?

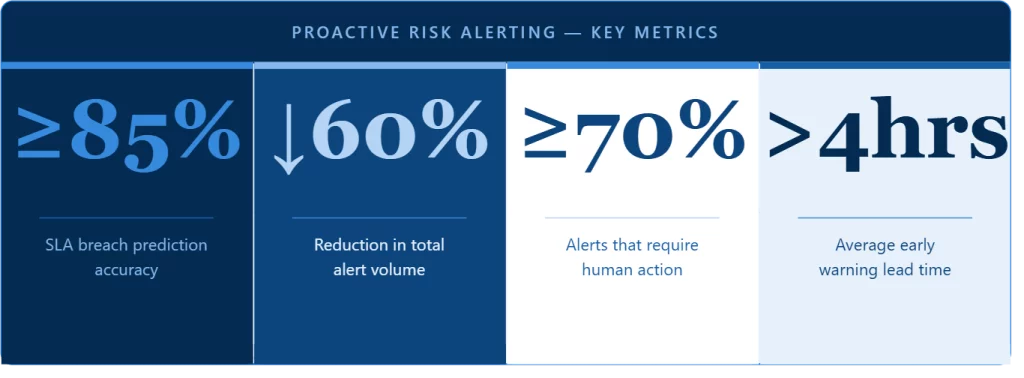

Alert fatigue usually happens when AI is layered on top of existing monitoring without proper tuning, so it amplifies noise instead of filtering it. The right approach inverts this. AI should reduce total alert volume by filtering out false positives, self-resolving events, and duplicates before they reach your engineers. In mature deployments, teams typically see alert volume drop 60% while the proportion of alerts that actually require action stays above 70%. If your previous experience was the opposite, the model wasn’t configured correctly for your environment. That is not a flaw in the approach itself.
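The three filters named above (known false positives, self-resolving events, duplicates) can be sketched as a single pass over time-ordered alerts. All names are hypothetical, as are the 5-minute self-resolve grace period and the 15-minute duplicate window. Real deployments would tune these per environment.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Alert:
    source: str                          # host or service that raised it
    check: str                           # which check fired
    fired_at: float                      # seconds since epoch
    resolved_at: Optional[float] = None


def filter_alerts(alerts: list[Alert], known_false_positives: set[tuple[str, str]],
                  self_resolve_s: float = 300, dedup_window_s: float = 900) -> list[Alert]:
    """Return only the alerts worth a human's attention, in time order."""
    kept: list[Alert] = []
    last_kept: dict[tuple[str, str], float] = {}
    for alert in sorted(alerts, key=lambda a: a.fired_at):
        key = (alert.source, alert.check)
        if key in known_false_positives:
            continue  # this source/check pair was previously confirmed as noise
        if alert.resolved_at is not None and alert.resolved_at - alert.fired_at <= self_resolve_s:
            continue  # cleared on its own within the grace period
        previous = last_kept.get(key)
        if previous is not None and alert.fired_at - previous <= dedup_window_s:
            continue  # repeat of an alert already passed on within the window
        last_kept[key] = alert.fired_at
        kept.append(alert)
    return kept


raw = [
    Alert("db-1", "cpu", 0),
    Alert("db-1", "cpu", 60),                      # duplicate within 15 minutes
    Alert("web-1", "disk", 100, resolved_at=200),  # self-resolved after 100 s
    Alert("web-2", "ping", 150),                   # known false positive
    Alert("db-1", "cpu", 1000),                    # same problem, new window
]
kept = filter_alerts(raw, known_false_positives={("web-2", "ping")})
print([(a.source, a.fired_at) for a in kept])  # [('db-1', 0), ('db-1', 1000)]
```

Within each window, only the first occurrence of a repeating alert is passed on. An ongoing problem therefore resurfaces once the window expires rather than being silenced indefinitely.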

Our team is already stretched thin. How much work does it actually take to implement and maintain this, and who owns it?

Initial setup takes a few weeks: integrating with your existing tool stack, establishing baselines, and setting governance rules. Ongoing maintenance is lighter but not zero. Model performance drifts as your environment changes, so a quarterly review cadence is essential. Ownership typically sits with a service delivery lead, not a data science team. Most organisations see a net reduction in team workload within the first quarter, even after accounting for implementation effort, but only if the setup is done properly.

How do I make the business case for AI in IT service delivery to leadership that’s skeptical of AI hype?

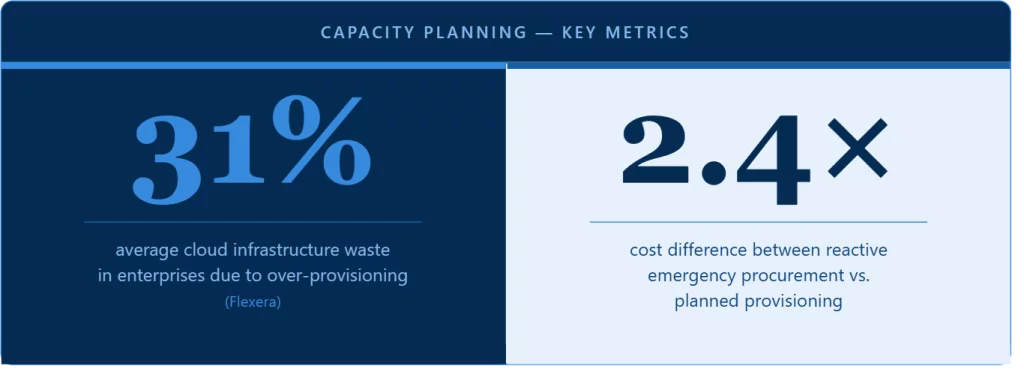

Skip the hype and lead with costs leadership already knows. What did your last major unplanned outage cost in engineering hours, client credits, and reputational damage? Then point out that 70% of outages produce detectable signals well before failure. Add the operational numbers: 31% average cloud infrastructure waste from over-provisioning, a 2.4× cost premium on emergency capacity procurement, and 6+ hours per manager per week spent on manual reporting. Build the case around what you’re currently spending on preventable failures, not around what AI promises to deliver.

How do you handle AI making a false prediction or missing an incident?

This will happen, and good governance exists precisely for this reason. Nothing client-facing goes out without human review. No exceptions. For internal predictions and alerts, the human intervention rate (how often AI outputs are corrected) is tracked as a core metric and used to improve the model over time. Every divergence between what the model recommended and what the human decided is logged. Think of AI as a capable junior analyst: useful, usually right, but always supervised.

How do we make sure AI-generated client communications don’t damage relationships?

Human review before anything goes out externally is non-negotiable. A two-minute check by a service delivery manager costs nothing compared with the fallout from a tone-deaf communication after a major incident. Beyond that, the model should be trained on examples from your best service delivery managers, not generic templates. It should also be calibrated to each client’s technical level and communication preferences. Sentiment analysis on ongoing email and ticket threads can also flag deteriorating relationships weeks before a formal complaint lands.

How does AI capacity planning handle situations it has never seen before, such as a sudden large client migration or an unexpected product launch?

It doesn’t, and any honest answer has to acknowledge this. AI capacity models are pattern-based; they forecast by extrapolating from historical data. Novel scenarios require human judgment that no model can replicate. The right approach is to use AI for the 80% of capacity planning that’s predictable and routine, freeing your senior engineers to focus on the edge cases that actually need their expertise. Human review of capacity forecasts ahead of major business events should always be part of the process.

We operate across multiple cloud providers and on-premise infrastructure. Does AI work in that kind of complex environment?

Hybrid and multi-cloud environments are where AI adds the most value, because their complexity far exceeds what human teams can track manually at the required granularity. The key requirement is data integration: the AI layer needs to ingest telemetry from all your environments, not just a single platform. NimbleWork is built to integrate across AWS, Azure, GCP, and on-premise systems simultaneously. The more heterogeneous your environment, the larger the intelligence gap, and the larger the potential impact of closing it.

How long does it realistically take to see measurable results?

The first 30 days are setup and baseline establishment; the model needs time to learn your environment before it can distinguish signal from noise. Days 30 to 60 are where early wins appear: fewer manual reporting hours, the first proactive alerts, and initial capacity forecasts. By day 90, teams with proper setup typically see measurable reductions in MTTD and MTTR, along with clear reporting time savings. The organisations that see results fastest treat the first 90 days as an active implementation, with clear ownership, a defined review cadence, and a willingness to correct the model when it’s wrong.

How do we prevent our team from becoming over-reliant on AI and losing the institutional knowledge that makes our service delivery effective?

This is the most important long-term governance question. Make AI outputs transparent: engineers should understand why a flag was raised, not just that it was raised, so they build pattern recognition alongside the model. Keep the human correction process active, not passive. And watch the human intervention rate over time. If it drops to near zero, that’s not a sign of success. It means people have stopped engaging critically with AI outputs, which is exactly when model drift becomes dangerous. AI should augment institutional knowledge, not quietly replace it.